AI Ethics Research, Exhibit Design, Publication Design

Machine Gaze

This master’s thesis is the culmination of seven months of intensive study and analysis exploring the ways human identity is represented by artificial intelligence. With a particular focus on generative AI, Machine Gaze asks us to consider what power we cede when we engage with the machine uncritically.

Information

ArtCenter

MFA Program

Credits

Photos by

Liam Nielsenshultz

AI Platform

Flora AI

Human identity is flattened by the machine.

With the rapid rise of artificial intelligence in society, and its growing presence in designers’ daily workflows, I set out to probe deeper into the machine and examine how it depicts human identity.

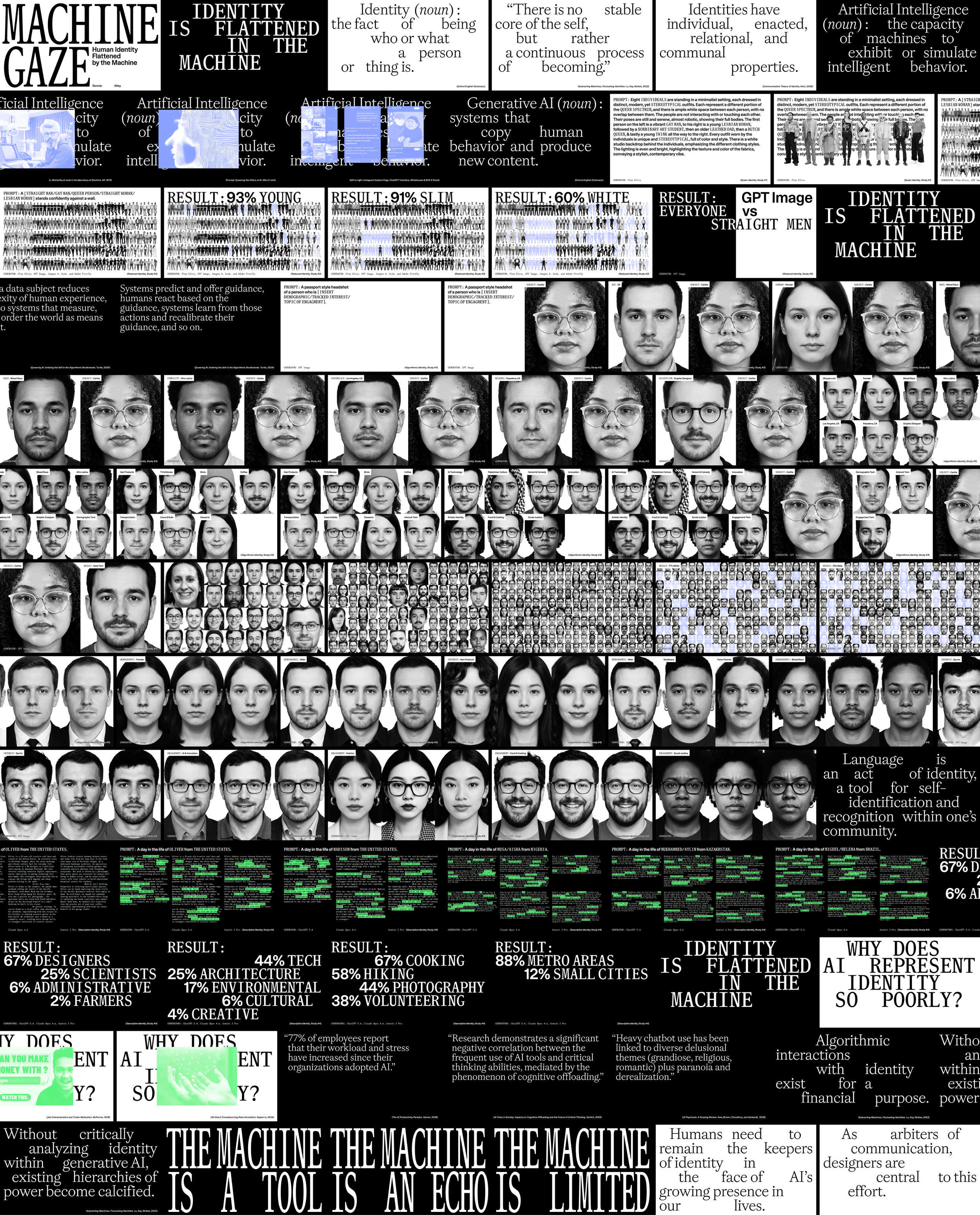

After conducting over 500 studies using generative AI models, I found persistent stereotypes embedded within the results, along with a clear and consistent bias toward overrepresenting white men.

This research led me to develop an exhibit and talk that expose these patterns and challenge designers to engage with AI more critically.

Artificial queerness.

When I began developing my thesis, I had no idea where it would ultimately land. I was initially researching queer linguistics as an act of identity and needed imagery to support a print piece I was developing. That’s when a question emerged: What does AI think queer people look like?

I found the results of this initial inquiry fascinating. Why were they dressed this way? Why did they look like that? But most importantly, what is it about these individuals that the machine reads as “queer”?

Reduced identity.

I then wanted to push the first study further, as I felt my initial prompt gave the machine too much to work with. I wanted to see how it would represent individuals from across the sexuality spectrum when simply asked to depict a person standing confidently against a wall.

I ran this simplified prompt through five popular image generators, nine times each, to build a substantial dataset for analysis.

Default depictions.

The results of my second study overwhelmingly depicted young, fit individuals, with the majority also appearing to be white. Several models sexualized women and queer people, while depicting their straight counterparts in professional or business attire.

These outcomes point to how real-world ideals and biases persist within the datasets that shape generative AI.

Algorithmic identities.

After observing the biases in my second study, I wanted to test how accurately AI could represent real people.

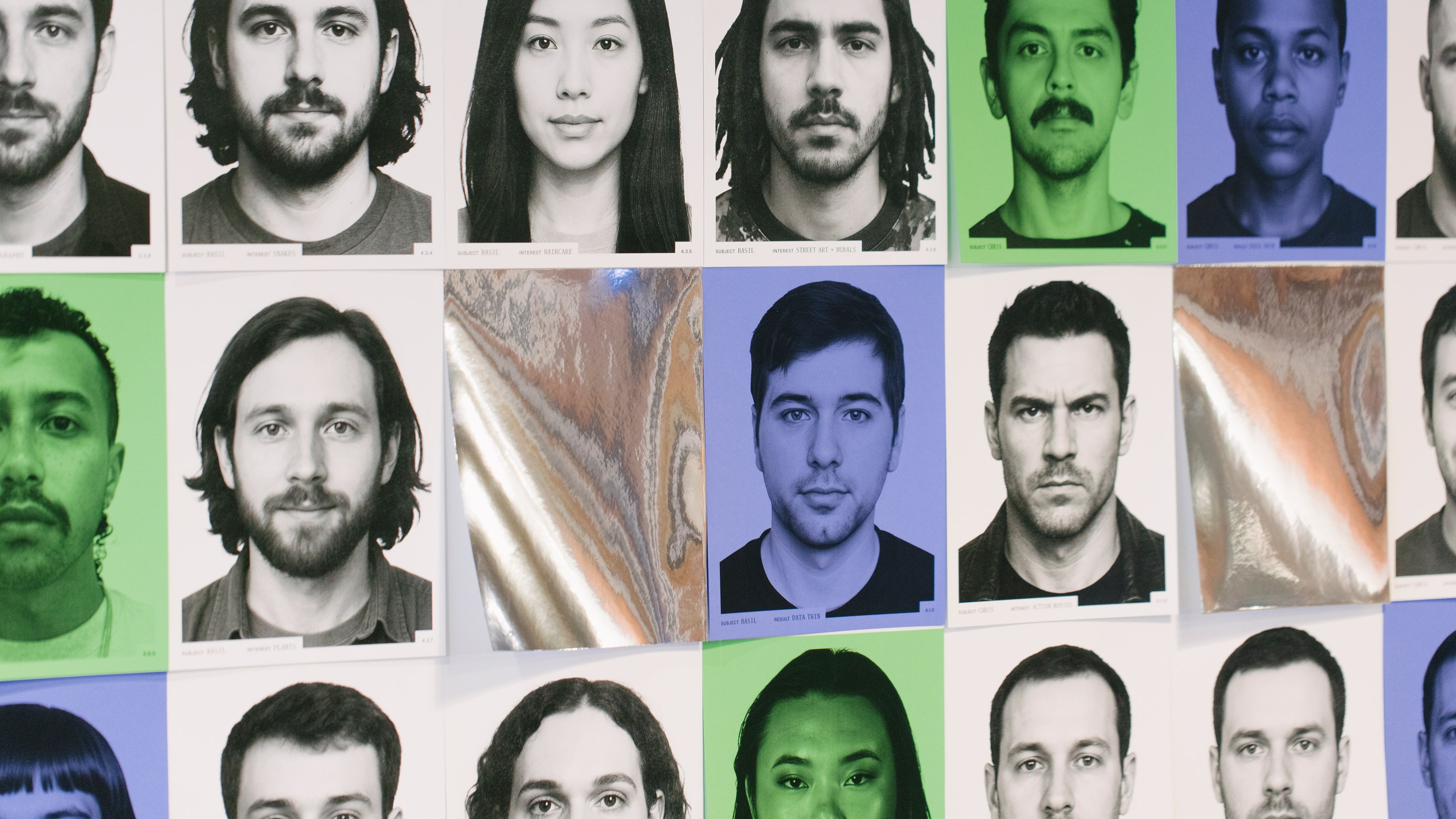

I asked eight volunteers to share their demographics and their data reports from Meta. I then used these identity markers, including demographics, tracked interests, and the topics each person engages with on Instagram, to prompt OpenAI’s GPT Image to generate portraits of them.

Bias in plain sight.

The results of this study reveal how, when gender or ethnicity is not specified, the model frequently defaults to generating images of white men.

More revealing, however, are the moments when women or BIPOC individuals appear in the results, raising questions about the datasets that inform GPT Image and the biased assumptions embedded within them.

Building awareness.

After analyzing the results of these studies, I knew I had landed on a topic that needed urgent attention. I developed a pop-up exhibit and talk to bring greater awareness to these findings.

Designed for scale.

Over the course of 10 weeks, I designed and developed an exhibition centered on the idea of scale, both in the number of studies produced and in presenting select results at human scale to create an interactive experience for visitors.

Prototyping perceptions.

To test the exhibit experience, I built a series of miniature models alongside full-scale mockups and projection tests to evaluate how the work would translate to physical space and to make sure it achieved the impact I was aiming for.

Intentional overwhelm.

Designed as a patchwork of seemingly unrelated results, the primary wall was built to reflect the ways in which generative AI pulls disparate bits of information from vast datasets.

I wanted the sheer volume of studies on display to feel overwhelming, drawing viewers in and asking them to sit with my findings.

Speaking truth to bias.

To guide visitors through my research journey, I developed a 30-minute talk that unpacks my findings and introduces a framework for how designers can engage more critically with the machine.

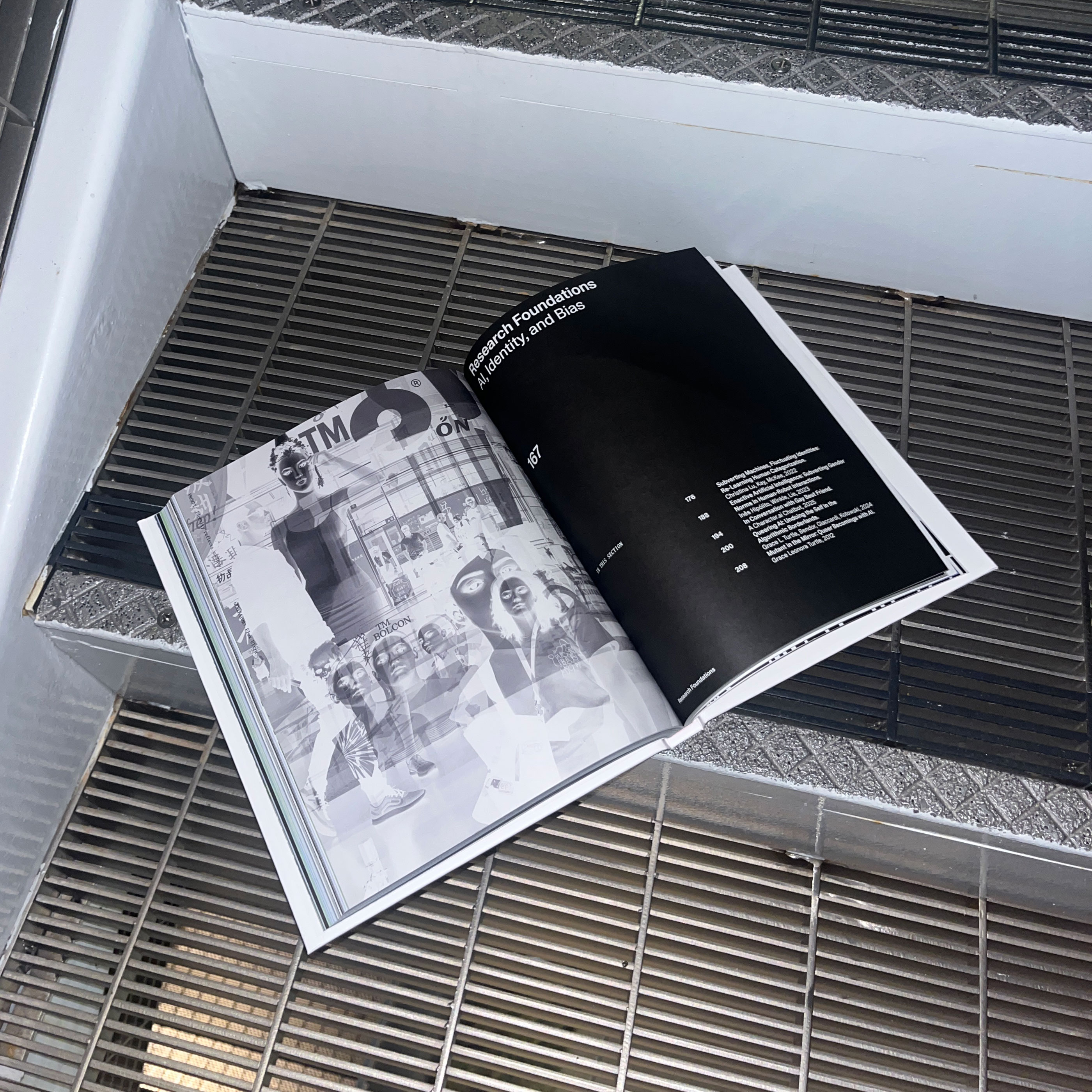

Reference material.

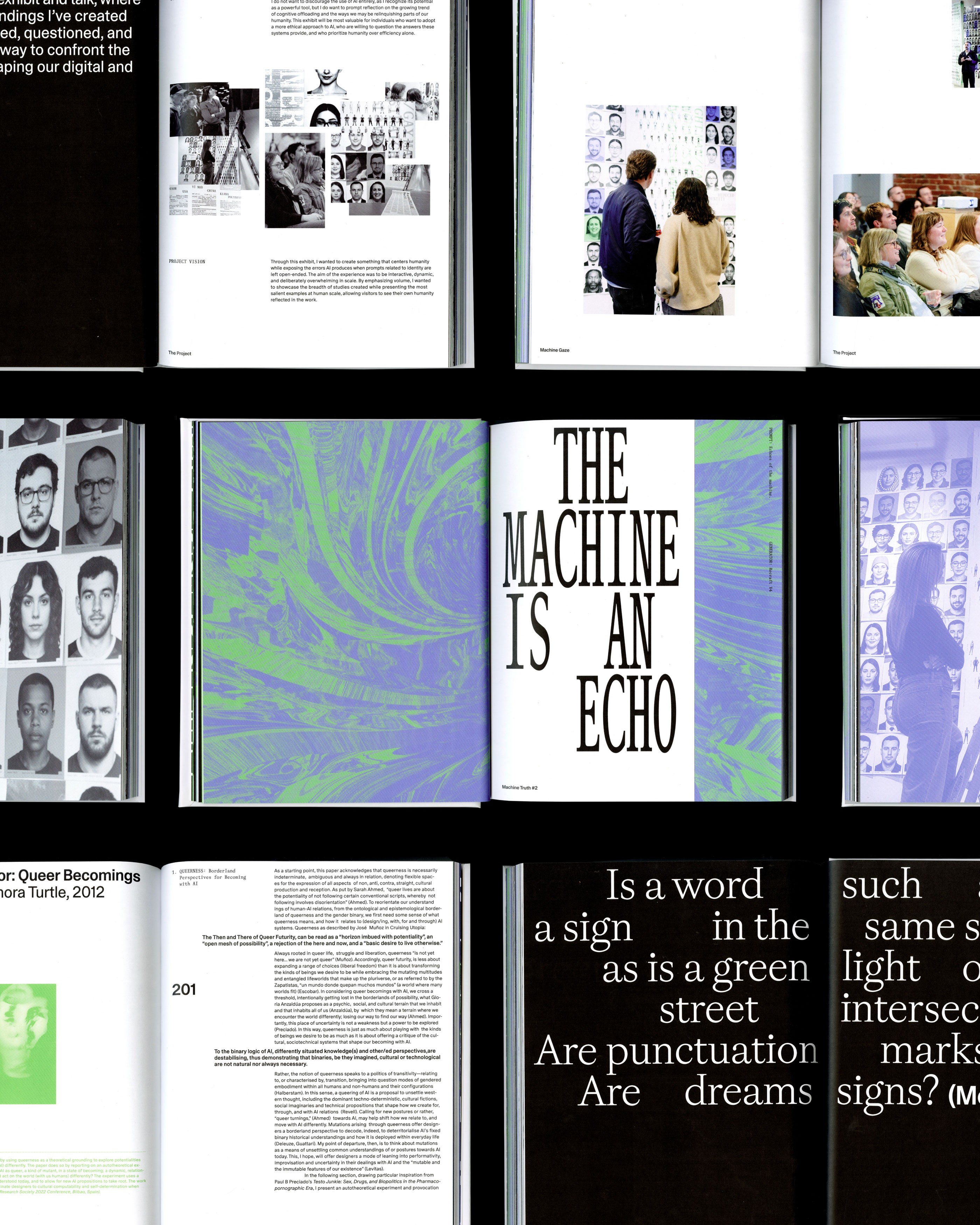

I wanted to leave visitors with a guide that they could reference after experiencing the exhibit, and so I developed this print piece that summarizes my findings and details my calls to action.

Each print was unique, with its interior cover showcasing one of the hundreds of studies I conducted.

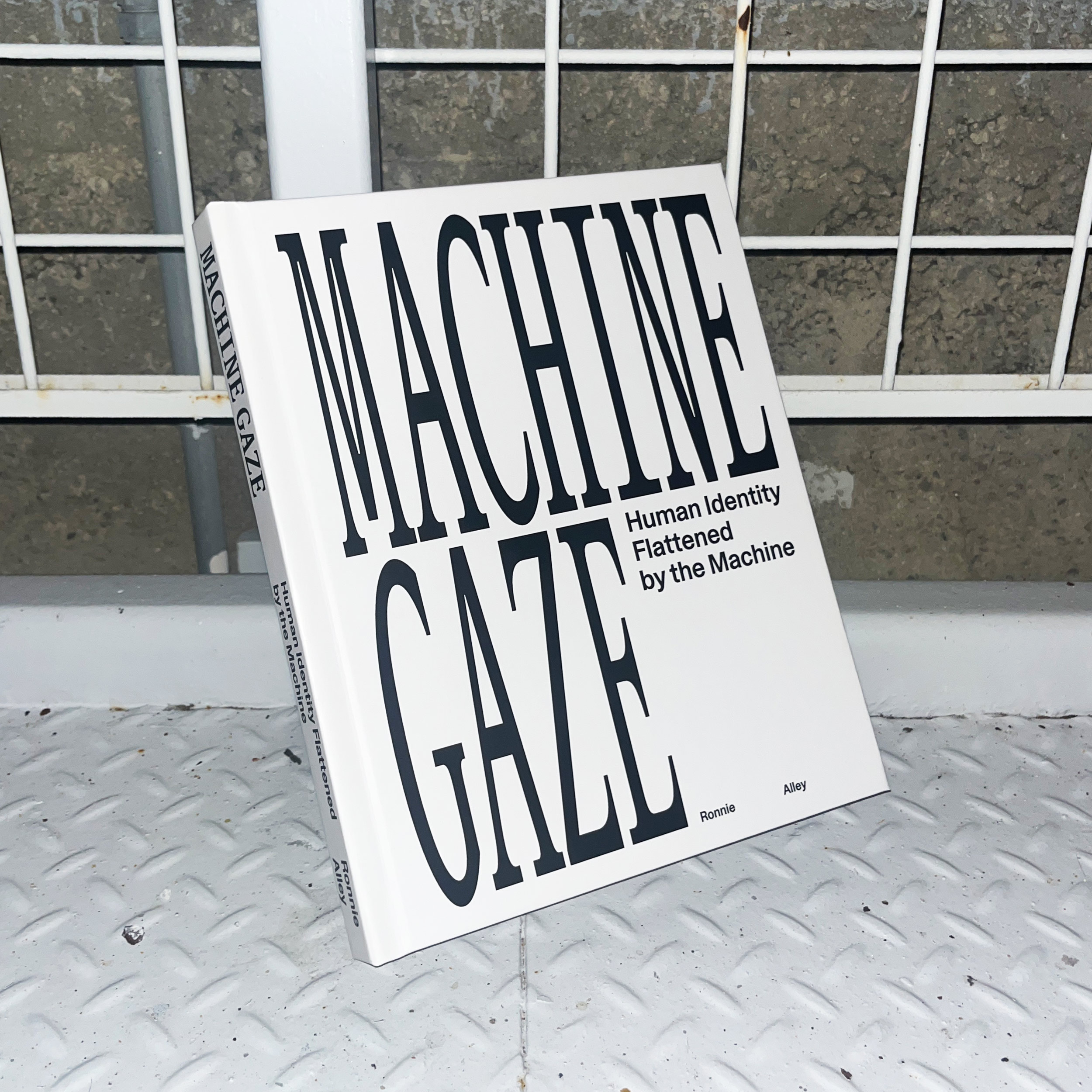

Final output.

All of the research, studies, and testing conducted throughout this project were ultimately compiled into a 260-page book. Within these pages are the key findings, insights, and processes that defined my work as a whole.
